In a recent Premier League game, Manchester United went 2-0 up when striker Marcus Rashford ran on to a pass and slotted the ball past Liverpool’s goalkeeper, Alisson Becker. The game was then held up briefly while the “video referee” checked whether Rashford was ahead of the last defender, Joe Gomez, when the pass was made. The difference between onside and offside—between goal or no goal—can be tiny:

Marcus Rashford was onside for Manchester United’s second goal due to the tolerance level which was added to VAR offside last summer.

Would have been offside in 2020-21.

When a player is onside due to tolerance level one green line is shown, drawn to the defender.

#MUNLIV pic.twitter.com/3SRpOPX7fN

— Dale Johnson (@DaleJohnsonESPN) August 22, 2022

Indeed, the margins can be so small that simply placing the camera at a slightly different angle could make a big difference. This problem of camera angles, and how they affect our perception of offside calls, is what encouraged me to use my expertise in 3D motion capture technology to explore the accuracy of video refereeing systems.

Video assistant referee (or VAR) technology was first introduced in 2018 to help referees review decisions on goals, red cards, penalties and mistaken identity. Since then, the total number of fouls, offsides and yellow cards has decreased.

On the other hand, VAR has increased the total match time while reducing the effective playing time. The final VAR outcome is determined by a human operator in an office far from the stadium—who may of course be prone to human error—before being relayed to the on-pitch referee.

Yet another VAR controversy arose recently when the on-pitch referees allowed Newcastle United and West Ham goals against Crystal Palace and Chelsea respectively, only for those goals to be disallowed after VAR reviewed them. These decisions were heavily criticized in the media and now PGMOL, the referees’ body, has promised to “fully co-operate” with a Premier League review of the incidents.

Why offsides are so hard to judge

Law 11 of association football states that a player is in an offside position if any of their body parts except their hands and arms are in the opponents’ half and closer to the opponents’ goal line than both the ball and the second-last opponent (the last opponent is usually, but not necessarily, the goalkeeper).

Referees and assistant referees need to identify the exact moment the ball was kicked and check the position of often fast-moving players at the same time. If in doubt, they can review the video footage of the incident. These videos are often recorded at 30 frames per second, and the image can still blur because the players move so quickly.

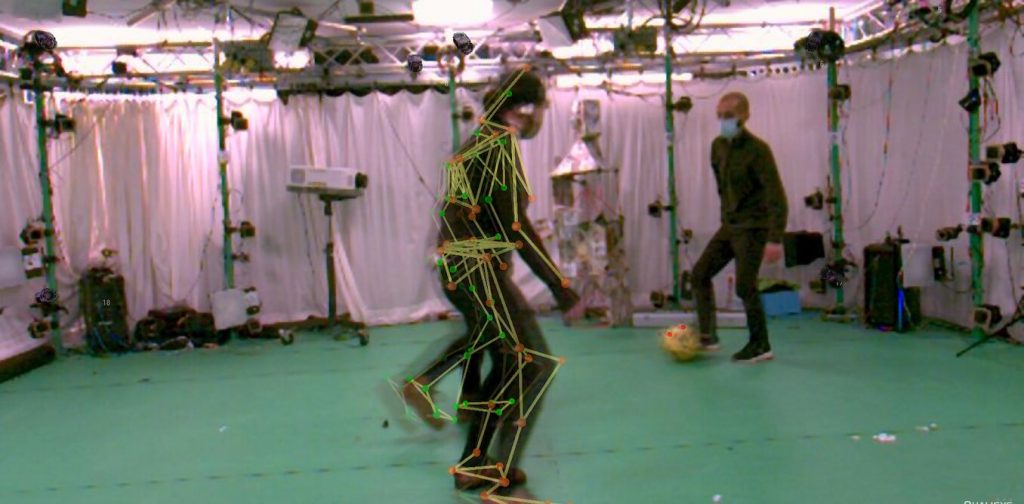

It is therefore unclear whether the current video replay technology is accurate enough to deal with the narrowest offside situations. To find out, I used optical motion capture technology, which records the positions of the players and the ball in 3D with higher accuracy and so can be used to validate the outcomes of 2D video systems.

I created some offside scenarios in a laboratory and asked volunteers to act as the players and the VAR. In each scenario, one player passed the ball to their teammate who was standing next to an opponent.

I placed reflective markers on the players and the ball and recorded their 3D positions with a motion capture system. I also recorded the scenes with video cameras placed at different viewing angles. Then I asked ten college students to watch the pre-recorded events, and to determine the ball-kick moment and identify whether the player was offside.

My results were recently published by the International Society of Biomechanics in Sport. I showed that people on average judge the offside moment as being later than the actual moment the ball was kicked by 132 milliseconds, or 0.13 seconds.

Such a delay may not seem like much, but in a fast-paced game like football it could be enough to put players in a different position and therefore make them appear offside. For example, a player moving at about 8 meters per second covers about 1 meter in just 0.13 seconds.
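The arithmetic behind that estimate is simply distance = speed × delay. Here is a minimal sketch using the 8 meters per second and 132 millisecond figures quoted above; the function name is only illustrative, and it assumes the player moves at constant speed.

```python
def displacement_m(speed_m_per_s: float, delay_s: float) -> float:
    """Distance a player covers during a timing error, assuming constant speed."""
    return speed_m_per_s * delay_s


# Figures from the text: a player at 8 m/s and a 132 ms average judgment delay.
shift = displacement_m(8.0, 0.132)
print(f"{shift:.2f} m")  # 1.06 m
```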

When viewing videos taken from 0° and 90° angles (from raised positions in line with the players and behind the goalkeeper), participants were more likely to be accurate. At a 45° viewing angle, when the attacker appeared to the left of the defender in the image, the attacker sometimes appeared to be closer to the goal line, resulting in wrong offside judgments.

Similarly, when the attacker was on the right side of the defender, he sometimes appeared to be next to the defender even when he was offside. It seems that these wrong decisions result from the relative optical projections of the two players at this camera viewing angle.
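One way to picture this projection effect is a deliberately simplified model. It is not the analysis used in my study. It assumes an orthographic camera (no perspective), considers only the horizontal axis of the image, and uses hypothetical player positions. It compares only an in-line view with an oblique one, and does not model the raised view from behind the goalkeeper.

```python
import math


def apparent_lead_m(attacker, defender, camera_angle_deg):
    """Apparent attacker lead along the image's horizontal axis, in meters.

    Players are (x, y) ground positions: x runs toward the goal line and
    y runs across the pitch. At 0 degrees the camera looks along the offside
    line, so the image axis shows x alone. At oblique angles the sideways
    separation (y) leaks into the apparent lead.
    """
    theta = math.radians(camera_angle_deg)
    dx = attacker[0] - defender[0]
    dy = attacker[1] - defender[1]
    return dx * math.cos(theta) + dy * math.sin(theta)


# Hypothetical case 1: attacker exactly level (same x), 2 m to one side.
level = apparent_lead_m((20.0, 12.0), (20.0, 10.0), 45)
print(round(level, 2))  # positive: a level attacker looks ahead

# Hypothetical case 2: attacker 1 m ahead (offside), 1 m to the other side.
ahead = apparent_lead_m((21.0, 9.0), (20.0, 10.0), 45)
print(round(ahead, 2))  # close to zero: an offside attacker looks level
```

In this toy model the in-line view (0 degrees) returns the true lead, while the 45 degree view shifts it in opposite directions depending on which side of the defender the attacker stands.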

How to reduce these biases further

As there is still a human element to VAR, it seems impossible to remove all potential errors and biases and achieve 100% accuracy. Nonetheless, there are several things we could do to reduce these biases further. These include higher frame-rate cameras, which would allow the moment of ball contact, and therefore the offside moment, to be identified in slower motion.
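To see why frame rate matters, consider how far a player can travel between two consecutive frames. The sketch below reuses the 8 meters per second and 30 frames per second figures mentioned earlier. The 60 and 120 frames per second values are hypothetical comparison points, not the specification of any particular VAR camera.

```python
def frame_gap_m(speed_m_per_s: float, fps: float) -> float:
    """Distance a player travels between two consecutive video frames."""
    return speed_m_per_s / fps


# 30 fps comes from the text; 60 and 120 fps are illustrative assumptions.
for fps in (30, 60, 120):
    print(f"{fps} fps: {frame_gap_m(8.0, fps):.3f} m between frames")
```

At 30 frames per second the gap is roughly 27 centimeters, and it halves each time the frame rate doubles.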

For marginal offside decisions, VAR should replace its current one-pixel line with thicker lines that represent the uncertainty zone. Where the lines overlap, the situation could be deemed onside.
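The overlapping-lines idea can be sketched as a simple rule. This is only an illustration of the proposal, not how any existing VAR system works, and the 5 centimeter line width is an arbitrary assumed value.

```python
def is_onside(attacker_x_m: float, defender_x_m: float, line_width_m: float) -> bool:
    """Decide onside/offside using thick lines as uncertainty bands.

    x is the distance toward the opponents' goal line, so a larger x is
    nearer the goal. Each player's line is a band of width line_width_m
    centred on that player's position.
    """
    if attacker_x_m <= defender_x_m:
        return True  # attacker is level with or behind the defender
    # Two equal-width bands overlap when their centres are less than one width apart.
    return (attacker_x_m - defender_x_m) < line_width_m


LINE_WIDTH_M = 0.05  # hypothetical band width, chosen only for illustration

print(is_onside(10.03, 10.00, LINE_WIDTH_M))  # True: bands overlap
print(is_onside(10.20, 10.00, LINE_WIDTH_M))  # False: clear gap
```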

Finally, where a parallel or perpendicular view of the event is not available, VAR decisions should be cross-checked against other camera angles. In the longer term, VAR could use “volumetric video” that captures the scene in 3D and can be viewed on flat screens as well as in 3D displays or VR goggles.

These technologies might not ever completely resolve the question of whether or not Rashford was offside—football fans, players and managers love a good argument. But it should not be over millimeters.